Chaos, Macro Quantum, & Cognition

An exploration of the idea that cognition, and language as our best window into cognition, are a dynamic expansion of the world, not a compression of it.

Presentations

Dynamic Language Model - Bangkok, 2024

Winning presentation at the Nerdconf hackathon, exploring dynamic approaches to language modeling.

Video • 2024

AGI-21 5 Minute Summary

Brief overview of key concepts in artificial general intelligence research

Video • 2021

AGI-21 Workshop presentation cued to 'One more thing'

Workshop presentation, with extended discussion session

Video • 2021

Vector Parser - Slides from AGI-21 Presentation

Slides from both the main session and the workshop presentations

Research • 2021

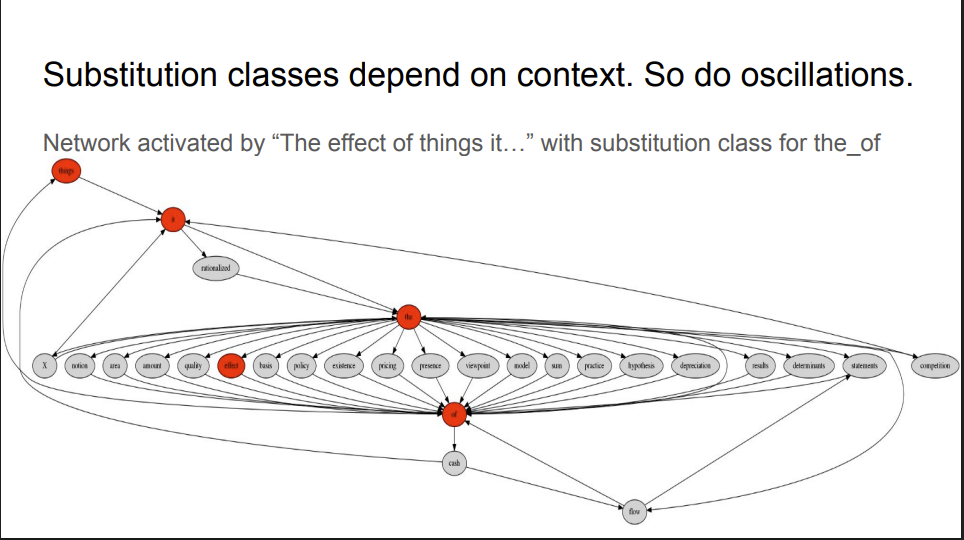

2003 - Wave Linguistics

A radical analogy between wave dynamics in physics and linguistic category. Presentation made to Nik Kasabov's research group at AUT, Auckland, NZ, 2003.

Research • 2003

Podcasts

Entangled Things

2022 interview with the Entangled Things podcast on a (macro) quantum interpretation of different assemblies of data. Audio • Transcript

Media • Various

X Discussion on LLM Solutions

Discussion with @SoorenaSedighi on fixing LLMs through contradictory meaning, synchronous oscillations, and alternative approaches to AI. Audio • Transcript

Discussion • 2024

Projects & Discussions

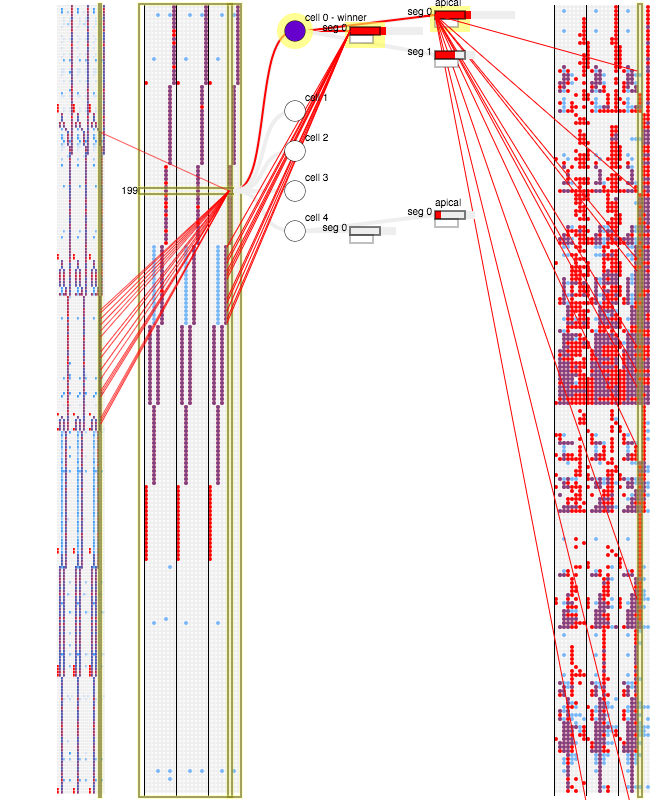

2015 - Proof of concept coding language sequences in Felix's Clojure port of Jeff Hawkins' CLA sequence coding network

Felix Andrews' write-up of our 2015 collaborative work on sequence coding using HTM principles. An attempt to find ways to implement ad hoc clustering of network representations of sequences.

Code • 2015

HTM Theory Forum

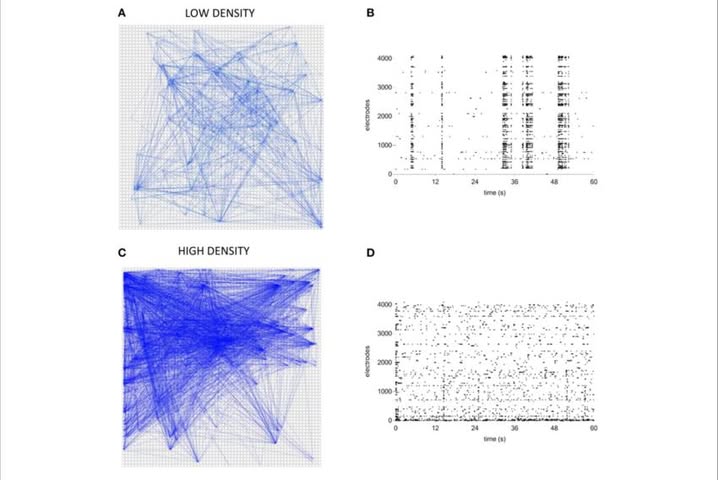

An attempt to test my oscillation structuring ideas, implemented as a GitHub project by Compl Yue in 2023. Discussions on Jeff Hawkins' HTM Theory forum.

Forum • Research

Oscillating Networks for AI

Facebook group for discussing the possible significance of oscillating networks for artificial intelligence

Code • Community